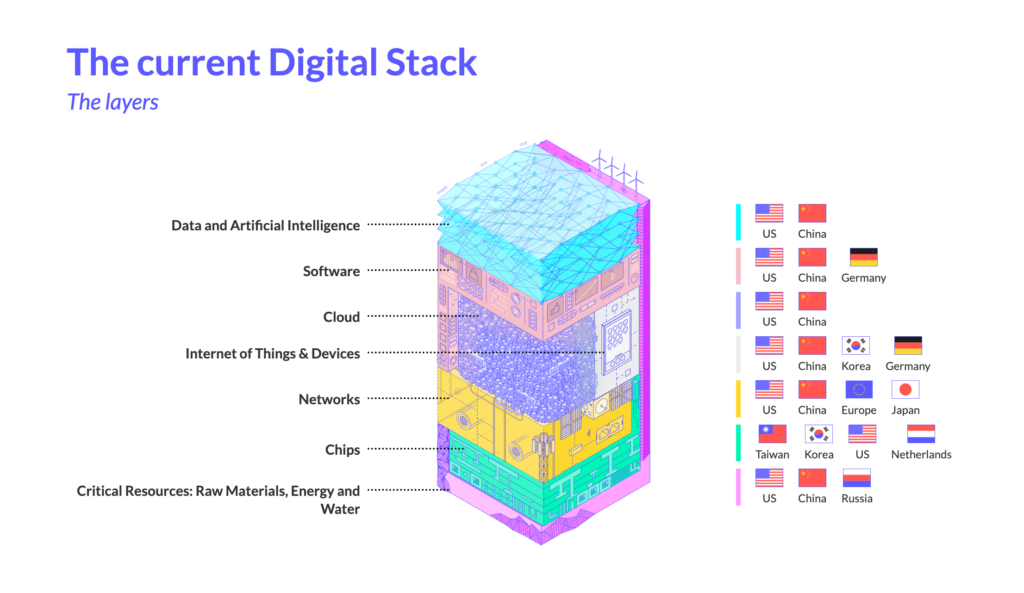

This month's title is a little cryptic, but it relates to three relevant topics in AI that I will elaborate on throughout this newsletter, especially in the Market and AI sections. The picture, from EuroStack, shows all the technology layers and who leads each of them globally, illustrating how they are interconnected. Let me briefly introduce each topic here, as together they form the core theme of this issue.

Autophagy is a biological process that occurs, for example, when we fast: the body cleans itself by consuming its own components that are no longer needed. When it goes on too long or too far, it can turn into a form of self-cannibalization.

AI autophagy, which I started referring to here in October 2024, is the term the market uses for what happens when AI is trained on data produced by AI itself: a progressive decrease in content quality and a growing amplification of bias, as noise and distortions are replicated and expanded.
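The mechanism can be illustrated with a deliberately tiny toy, sometimes discussed under the name "model collapse". In this sketch (an assumption for illustration only, far simpler than real LLM training), the "model" is just a Gaussian distribution, and each generation is fitted exclusively on samples generated by the previous generation. The function name `simulate_autophagy`, the sample size, and the number of generations are all hypothetical choices.

```python
import random
import statistics


def simulate_autophagy(generations: int = 200, sample_size: int = 10, seed: int = 42) -> list[float]:
    """Toy model: each generation is 'trained' only on data produced by the previous one.

    Returns the spread (standard deviation) of the model at every generation.
    """
    rng = random.Random(seed)
    mu, sigma = 0.0, 1.0  # generation 0 stands in for the original, human-made data
    history = [sigma]
    for _ in range(generations):
        # The current model generates synthetic data...
        samples = [rng.gauss(mu, sigma) for _ in range(sample_size)]
        # ...and the next model is fitted on that synthetic data only (maximum-likelihood estimates).
        mu = statistics.fmean(samples)
        sigma = statistics.pstdev(samples)
        history.append(sigma)
    return history


if __name__ == "__main__":
    history = simulate_autophagy()
    for gen in (0, 10, 50, 100, 200):
        print(f"generation {gen:3d}: spread = {history[gen]:.6f}")
```

With small samples the estimated spread tends to shrink from one generation to the next, so after enough self-training loops the model keeps producing an ever narrower range of values: a simple analogue of diversity, and therefore information, being lost when AI learns from AI.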

Agents can accelerate errors when they are chained, because hallucination rates compound along the chain. I analyze this further in the AI section with real market examples.

We see progress in agentic AI, including agentic ERP, coworker agents executing activities automatically, and, clearly, physical AI. I will elaborate with concrete examples on how this path has to be properly guarded so that it does not lead to a progressive decrease in content quality.

Finally, digital sovereignty, the third component of the title, is about how we build or source the entire technology stack to gain value. AI sits at the top of a complex chain that is increasingly influenced by factors such as energy, water, and disruption from wars, as we saw recently, and this can lead to very different outcomes.

I believe these three topics influence each other. The agentic trend is pushing toward automation and shifting work toward autonomous agents. The reuse of data, sometimes even synthetic data, generates bias in agents' decisions, and that bias amplifies across long chains of decisions when one agent's output becomes the next agent's input. Meanwhile, attention to sovereignty shapes how an industry can use these agents in its core business without risking business continuity if they become unavailable or, even worse, unreliable.

Quick Executive Takeaways

Key technology priorities to focus on for your business at this stage, if not already done:

- BPO & Outsourcing: Reassess outsourcing contracts against AI agent capabilities. Consider an insourcing strategy for well-described processes where agentic automation reduces dependency on external providers

- ERP: Start executing IT/OT integration with a modern agentic approach

- AI: AI governance; link AI initiatives to value creation rather than isolated use cases; strictly differentiate between initiatives for AI augmentation, AI automation, and processes reimagined with AI. Design a stack for multi-AI composition.

- Multi-cloud: Update the strategy with repatriation and sovereignty considerations

- HR: Upskill the entire workforce using AI-driven training platforms

- Cybersecurity: Initiate roadmap on Post Quantum Cryptography (PQC)

If you would like to understand more about what is behind these recommendations, feel free to dive into the different sections of this and previous newsletters.

If you would like support or an independent perspective, feel free to reach out to me.

Market Evolution

Chipsets & semiconductors

The Silicon Foundry War

Back in February 2025, I predicted here that the US Administration might push to revamp Intel's production, narrowing the competitive gap with TSMC. As mentioned in last month's newsletter on the approach to the Angstrom Era, we are seeing an acceleration in the mass production of 1.8nm-class nodes from Intel and 2nm nodes from TSMC in Arizona, in clear competition. This is relevant because moving to the 2nm level brings roughly a 25-30% reduction in power consumption compared with the previous node generation, and in times of rising energy costs this seems a perfect justification for the change. We also see other players, like Meta, coming out with their own 1.8nm AI chips for training and especially for reasoning.

Meanwhile, Europe is also developing its semiconductor capabilities around the 2nm generation, driven mainly by demand for IoT sensors and connected cars.

Big Techs

AI or not AI

A lot has happened in the AI world over the last few months: Anthropic's resistance to the Pentagon over restrictions on using Claude for military control purposes; Anthropic's subsequent inclusion in the US Defense list of supply chain risks, partially postponed; a different approach taken by OpenAI, Google, and other big tech companies, with consequences including some key AI leaders leaving; and a shift of users toward Anthropic.

In the same period, OpenAI raised a further $110B funding round from Amazon, Nvidia, and SoftBank, continuing the loop of circular investments and also generating some conflict with its historical backer Microsoft, which was considering the possibility of suing OpenAI.

Under its current plans, OpenAI targets $280B in revenue in 2030, up from $13.1B so far, and plans to spend $600B by 2030. This raises questions about the long-term sustainability of the whole model, especially as further innovations arrive: last year DeepSeek, with distillation, an open-source approach, and cheaper LLMs running on older AI chipsets; and, most recently, Google's TurboQuant, which could accelerate vector search through compression techniques, achieving faster results with less computing power.
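To make the idea of "compressing vectors to search faster" concrete, here is a generic sketch of symmetric int8 scalar quantization, one of the simplest compression techniques for vector search. It is explicitly not TurboQuant's algorithm, which is more sophisticated; it only shows the general trade-off. Every name here (`quantize`, `top1`, `build_demo`) and every parameter (200 vectors, 32 dimensions, the seed) is a hypothetical choice for illustration.

```python
import math
import random
from array import array


def normalize(vec: list[float]) -> list[float]:
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]


def quantize(vec: list[float], scale: float) -> array:
    """Symmetric scalar quantization: one signed byte per dimension instead of a 4-byte float."""
    return array("b", (max(-127, min(127, round(x / scale))) for x in vec))


def top1(query, database) -> int:
    """Index of the database vector with the highest dot product with the query (brute force)."""
    return max(range(len(database)),
               key=lambda i: sum(q * d for q, d in zip(query, database[i])))


def build_demo(n: int = 200, dim: int = 32, seed: int = 7):
    rng = random.Random(seed)
    db = [normalize([rng.gauss(0.0, 1.0) for _ in range(dim)]) for _ in range(n)]
    # One global scale so that the largest absolute component maps to +/-127.
    scale = max(abs(x) for v in db for x in v) / 127
    db_q = [quantize(v, scale) for v in db]
    return db, db_q, scale


if __name__ == "__main__":
    db, db_q, scale = build_demo()
    query = db[42]
    print("exact top-1:    ", top1(query, db))
    print("quantized top-1:", top1(quantize(query, scale), db_q))
    print("bytes per vector:", len(db_q[0]) * db_q[0].itemsize, "vs", len(db[0]) * 4, "as float32")
```

Each stored vector shrinks to a quarter of its float32 size and comparisons become small-integer arithmetic, while the nearest match is usually preserved; the design question for any such technique is how much ranking accuracy is traded for memory and compute.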

The last several months have seen a clear shift of users from OpenAI to Google Gemini, which gained several million users. Most recently, Anthropic, considered by the Pentagon to be the most advanced LLM provider, gained further ground. OpenAI risks losing money at an unsustainable level: the backing of big tech names grants some stability, but it does not make the overall model sustainable in the long run. A few days ago, OpenAI announced it would shut down Sora, its video platform, which had secured clients like Disney in a $1B agreement, because it was simply not sustainable given its costs.

Microsoft, whose strategy I questioned here months ago for depending too much on OpenAI for Copilot and for being late in investing in its own LLM, recently started adding Claude to Copilot with limited capabilities, and from April it is embedding further capabilities like Claude Cowork. Still, Copilot has only 6 million active users so far, while its contenders OpenAI and Google count several hundred million. Google's recent gain of a large share of users after the release of Gemini 3 has also driven a shift in Microsoft's AI leadership toward development, which leads me to assume there will be renewed investment in its own LLM and an attempt to make Copilot more usable than its competitors.

My Thoughts: The LLM and AI landscape is moving fast, with monthly innovation creating fierce competition and shifts of power. Decoupling, as Microsoft is doing by offering clients a product that can consume different LLMs, can be a way to ensure long-term stability, but only if the product is not commoditized by feature limitations compared with its contenders.

My Thoughts 2: In my own AI code-generation work across different LLMs, I have found tighter token consumption limits from Anthropic and Google, hitting the hourly or daily limit quite quickly for a given number of tasks at a given service level, while OpenAI offered much higher limits. This could be a way to retain clients with higher consumption allowances, but at the same time it potentially makes the product money-losing, burning far more than what gets charged, which is clearly a long-term risk for the provider. Having a single AI provider strategy, without being prepared for a swap, could be highly dangerous as we embed more agentic AI in business processes that could be hit by an operational interruption, especially if an enterprise builds a core process on top of a service that could be discontinued.
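Being "prepared for a swap" can start with something as simple as never calling a single provider directly. The sketch below assumes each provider is wrapped behind the same hypothetical signature (a function taking a prompt and returning text) and tries them in order of preference. The names `complete_with_fallback`, `quota_exhausted_provider`, and `echo_provider` are illustrative stand-ins, not any vendor's SDK.

```python
from typing import Callable, Sequence

# A provider is modeled as (name, function taking a prompt and returning text).
# Real SDK clients would have to be wrapped behind this same hypothetical signature.
Provider = tuple[str, Callable[[str], str]]


class AllProvidersFailed(RuntimeError):
    """Raised when no configured provider could answer the prompt."""


def complete_with_fallback(prompt: str, providers: Sequence[Provider]) -> tuple[str, str]:
    """Try each provider in order of preference; return (provider_name, answer)."""
    errors: list[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # e.g. outage, quota/token limit, timeout, discontinued service
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors) or "no providers configured")


# Hypothetical stand-ins for real LLM clients, used only for the demo.
def quota_exhausted_provider(prompt: str) -> str:
    raise RuntimeError("hourly token limit reached")


def echo_provider(prompt: str) -> str:
    return f"echo: {prompt}"


if __name__ == "__main__":
    name, answer = complete_with_fallback(
        "Summarize the open purchase orders",
        [("primary", quota_exhausted_provider), ("secondary", echo_provider)],
    )
    print(name, "->", answer)  # secondary -> echo: Summarize the open purchase orders
```

In practice the hard part is not the loop but keeping prompts, evaluations, and data contracts portable enough that the secondary provider produces acceptable answers for a core process.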

My Thoughts 3: The elephant in the room may rather be that many of these AI contenders are also SaaS providers with user-based pricing. As AI agents rise, user-based consumption will naturally slow down, and the two trends will not necessarily balance each other at the same time. Some are calling it the SaaS Apocalypse.

Digital Sovereignty

How communities and countries react to big techs

Last month I referred to Visio, the French alternative to Teams and Zoom, which is now being pushed to over 2.5M people in France's public administration. On top of this, in previous months I mentioned countries that started to push back against AI engines not compliant with regulations on pornography, and against social media use below a certain age, as in Australia. This month we also see US citizens stepping up action against Meta and YouTube over their children's addiction.

In parallel with this public reaction, the EU is organizing itself to build its own technology stack over the coming years. As I have been saying for several months, building your own technology stack is not impossible, but simply moving to an EU cloud does not solve the challenge: it is a multi-year, multi-layer investment. A great analysis was done last year by the EU Commission in this well-developed EuroStack document.

Building on my previous newsletters' references to the complexity of a European stack, and on the effort China has made over the last decade in this direction, let's focus on the current digital task.

What is clear is that each layer depends on the one below it, and gaps in any layer must be addressed, where possible, by building those capabilities or finding sources for them. Sovereignty requires that the entire stack can be secured without external dependencies, and each layer is in turn influenced by several components. Consider only that in the software layer not a single operating system is owned in the European market, neither for mobile nor for computers.

ESG

Energy

Energy, water, and the impact from AI and wars, the perfect storm

In October 2024, I assumed that energy restrictions in countries choosing to limit pollution, like the EU, would affect their capacity to deliver on AI, and that datacenter costs would differ in places relying on less commoditized energy sources.

The dependency AI -> Chips -> Energy, although oversimplified, describes a chain of risk quite accurately. The increasing energy demand from datacenters is creating a huge gap between demand and supply and, together with the war's impact on crude oil availability, is making the overall cost of energy surge.

This affects datacenters' power availability and cost of service, increases pollution from the extra generation needed to meet energy demand, and indirectly affects energy-intensive services like the crypto market, making coins more expensive to mine. That in turn reduces investment and valuations, affecting those who invested in these markets, for example, as we mentioned last year, pension funds investing in Bitcoin.

Water is the other element I had not covered in the past, but together with energy it is part of the equation. Datacenters consume large amounts of water for cooling, and even if moving them to space could offer natural cooling, that would not solve the level of computing demand generated by AI, nor keep pace with the required innovation lifecycle. Water shortage has also been used as a combat strategy in conflicts. We saw this recently in the Middle East, where water production, desalination for example, was hit, affecting overall water availability. Water and energy production are strategic targets that can influence the efficiency of the entire technology stack.

Most recently, datacenters themselves have gained attention as critical targets during conflicts, introducing a new level of impact that is difficult to mitigate, as recently happened to Amazon.

My Thoughts: AI automation shifts some activities from humans to machines, and the agentic approach adds a further level of automation. The risks of datacenters being targeted and of energy and water shortages, which indirectly affect datacenters, are raising attention on the need to distribute workloads properly and balance the risks to business continuity. This overall cocktail must also take geopatriation and digital sovereignty into account when distributing risk.

Cybersecurity

When the challenge comes from inside

This month, I wanted to add a short note on cybersecurity, for one main reason: the recent Anthropic Mythos, the rumors around it, and the fact that Claude could be used to compromise code and serve as a hacking tool. It is an interesting aspect to consider in how AI must be properly regulated and guarded to bring value rather than disruption.

AI

Agentic

Business Process Outsourcing (BPO) vs Insourcing

Last September, I focused here on my speculation that AI agents executing operational tasks would be applied first to well-described processes and would therefore target the BPO market. My reasoning was that companies could take the chance to insource activities back and potentially disrupt that market. This is now becoming visible in the outsourcing market. This type of paradigm shift is happening very fast, and the loss of contracts as work is insourced back could leave BPO providers struggling to reinvent themselves and reallocate up to 80% of their resources.

My Thoughts: BPO providers will have a few years (considering the average contract lifecycle) to adjust and will need to find faster ways to keep their commitments to share efficiency gains with clients in an increasingly automated market. AI agents are forcing providers to figure out how to reallocate that 70-80% of resources to other clients in a market that may no longer demand human resources for those activities. The short-term strategy could focus on vendor lock-in, creating dependency on other services. The long-term approach needs to justify a shift toward more value-added human services, including data quality enhancement, ideation of new revenue streams, and creative service improvements that are not part of the normal AI improvement process. All this, however, will not offset the headcount lost to the automation of repetitive tasks and will potentially force the major BPO providers to shrink.

Agents: cascading hallucinations

One effect of chaining agents is that each agent has a certain probability of hallucinating, depending on its specialization, the topic, its training, and how precisely the prompt has been set. When humans sit in the middle of the chain, their corrections can reduce the risk of hallucination, while in long chains of agents acting autonomously a hallucination can compound, and the reliability of the output drops quickly as the automated chain grows. The more biased the source data, the faster this compounding leads to unreliable results. For this reason, chains of verification are spreading to validate the quality of the outcome at intermediate steps and keep the noise from growing too much.
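The compounding effect can be quantified with simple arithmetic. Under the simplifying assumption that each agent errs independently with the same probability p, a chain of n agents finishes without any error with probability (1 - p)^n; if an intermediate verification step catches a fraction c of errors, the per-step residual error becomes p(1 - c). The rates below (5% per step, 80% caught) are illustrative assumptions, not measured values, and `chain_reliability` is a hypothetical helper.

```python
def chain_reliability(per_step_error: float, steps: int, catch_rate: float = 0.0) -> float:
    """Probability that a chain of `steps` agents ends with no uncaught error.

    Assumes each step fails independently with probability `per_step_error`, and that
    verification (if any) catches a fraction `catch_rate` of those errors at each step.
    """
    if not (0.0 <= per_step_error <= 1.0 and 0.0 <= catch_rate <= 1.0):
        raise ValueError("probabilities must be in [0, 1]")
    residual_error = per_step_error * (1.0 - catch_rate)
    return (1.0 - residual_error) ** steps


if __name__ == "__main__":
    for steps in (1, 5, 10, 20):
        unchecked = chain_reliability(0.05, steps)
        verified = chain_reliability(0.05, steps, catch_rate=0.8)
        print(f"{steps:2d} agents: {unchecked:.1%} without checks, {verified:.1%} with checks")
```

With these assumed rates, a 10-agent chain is error-free only about 60% of the time without checks, and about 90% of the time with verification at each step. Real errors are rarely independent: biased source data tends to make them correlated, which is exactly why the compounding can be worse than this model suggests.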

Autophagy – the biased rise

The fundamental point of this newsletter is the risk of leveling and commoditization of results. HBR recently published an interesting article on this effect under the name “trendslop”. It refers to a problem I analyze from time to time: the drop in content quality that can come from misusing AI and lacking the right level of human intervention in the loop. Follow my thoughts to the end of this section for a full overview of the risks I think should be taken into consideration.

My Thoughts: As we push automation further, we will face challenges in running AI agents due to internal and external factors, and it is important to design and operate them wisely so as not to be surprised later.

Some external factors are already a challenge, such as core business processes depending on an external LLM that could become unavailable or give unreliable answers, impacting core decisions; it is possible to design agents that avoid such side effects. We discussed how digital sovereignty may accelerate due to the risks of service unavailability and sensitive data leaks, and this is driving the creation of more LLMs, some built by reusing content from other LLMs, as DeepSeek did by distilling from OpenAI and others. This could become even more relevant with the rise of edge LLMs: smaller, more vertical, local, and potentially trained ad hoc for a specific purpose. They are more contextualized, but also more in need of specific training data.

The demand for more specialized content, together with copyright infringements not addressed in the training of several LLMs, combines with the challenge of bias from training on synthetic data. This is why several platforms have started using their users' content to train their own engines, both consumer and business LLMs, unless users formally opt out, while other platforms have started to push back on AI-generated content that was hurting their own producers of real content.

So there is a possible trend in which original content, being context-rich and lowering the risk of bias and errors in training, becomes more valuable than AI-generated content.

But there are also internal factors. When we automate our processes with an AI agent that continuously optimizes outcome targets and redesigns the processes, the organization's focus shifts toward how to optimize those targets and personalize them to be more competitive than others. Progressive automation, the reuse of common data and best practices, and AI optimizations can level the output and reduce differentiation: the same ideas from endless loops on the same training base, the same optimizations, the same results, no differentiation. This is where we must intervene, to preserve the added value that differentiates us across the overall value chain.

GG

Starting this month, the newsletter is published not only on LinkedIn but also on Substack, with comments enabled on both.