This month, the leitmotif of this newsletter is how AI-driven advances in cybersecurity, and the threats that come with them, can affect enterprises’ stability when combined with a shortage of skilled workforce caused by layoffs and missed upskilling.

I explore a set of converging factors and focus on the possible future consequences of the changes we are experiencing now, as a combination of events is producing multiplier effects. I also refer back to several predictions from previous newsletters that are now being reflected in today’s situation.

I see an imbalance between real-time threats, an increasingly agentic approach to AI that generates autonomous processes dependent on energy-hungry AI datacenters, and a lack of competent workforce able to intervene if something goes wrong. This mix, combined with an accelerated agenda to make enterprises AI-transformed, introduces risks of unexpected behaviour that, if not captured early enough, can lead to complete service outages, compliance and reputational problems, and increased threat exposure.

Quick Executive Takeaways

Key technology priorities to focus on for your business at this stage, if not already done:

- Transition toward AI Global Business Services & BPO: Reassess outsourcing contracts against AI agent capabilities. Consider an insourcing strategy for well-defined processes where agentic automation reduces dependency on external providers.

- ERP: Start executing on IT/OT integration within a modern agentic approach and a composable architecture.

- AI: Establish AI governance; link AI initiatives to 2-3 business domains rather than isolated use cases; differentiate strictly between initiatives for AI augmentation, AI automation, and processes reimagined with AI. Design a stack for multi-AI composition.

- Multi-cloud: Update the strategy with repatriation and sovereignty considerations.

- HR: Upskill the entire workforce through AI-driven training platforms.

- Cybersecurity: Initiate a roadmap for Post-Quantum Cryptography (PQC).

If you would like to understand more about what is behind these recommendations, feel free to dive into the different sections of this and previous newsletters.

Market Evolution

Chipsets & semiconductors

The US seeks to strengthen its own digital sovereignty

Recent news shows that Intel has improved steadily, and its stock price reflects that, thanks to the acceleration of its 18A (1.8nm-class) process, strong government support, and a growing partnership with other large US companies such as SpaceX, xAI, and Tesla in the strategic Terafab project to build AI superfactories. More than a year ago, in my February 2025 newsletter, I predicted that the US administration would push to revamp Intel and reduce dependency on TSMC, and I expected Intel to grow. At the time, I was trying to figure out with whom such a partnership would materialize. Looking at how things are going, it is indeed taking a more US-centric direction.

In the same newsletter, I also referred to the AI chip export restrictions that would come into effect to limit the export of advanced AI technology to countries like China, and over several previous newsletters I analyzed the emergence of a two-speed world, with a substantial digital divide between those able to run low-energy, AI-optimized chips built on sub-2nm lithography and those limited to older technologies.

Last year, the evolving export restrictions on the US’s most advanced chips created a debate I have already covered, including comments from Nvidia’s CEO on the limits to accessing the Chinese market. This year, the topic evolved with the introduction in January of the Remote Access Security Act, the US government’s reaction to the fact that many of these advanced chips, even if not exported, could be accessed via the cloud from third countries.

My Thoughts: In a digitally connected world, long-term digital walls restricting access to exclusive technology are hard to sustain. A year ago, I referred to North Korean workers using laptops located in the US to obtain jobs and work remotely, showing that creativity can find unexpected paths. I believe it will be hard to limit remote access to advanced GPU technology in the long term, as many workarounds could progressively emerge.

Big Techs

David grew into Goliath

The last few months have seen a shift in leadership among LLM providers, driven in part by the discussions between Anthropic and the Pentagon, which strengthened the perception of Claude’s capabilities and of the company’s stance. Anthropic is currently accelerating its fundraising, with its valuation soaring to $900B, surpassing OpenAI, and in the same month it signed a major multi-year agreement with Amazon to secure 5 gigawatts of compute, alongside a potential $40B investment from Google. This happened damned fast.

The new David may be DeepSeek with its V4 release, which dramatically optimizes inference costs and delivers a much more cost-effective solution than its competitors, with a contribution from Huawei that strengthens China’s own capabilities.

My Thoughts: Competition across countries and the acceleration of more efficient open-source models are opening a potential breach in what seemed undisputed power. I will touch more on this from the AI cybersecurity angle, and on where I see it accelerating the market.

Digital Sovereignty

A neutral perspective

A very dense and relevant analysis is Stanford’s 2026 AI Index Report, which gives a comprehensive picture from different angles. I recommend reading it in full to get the complete picture.

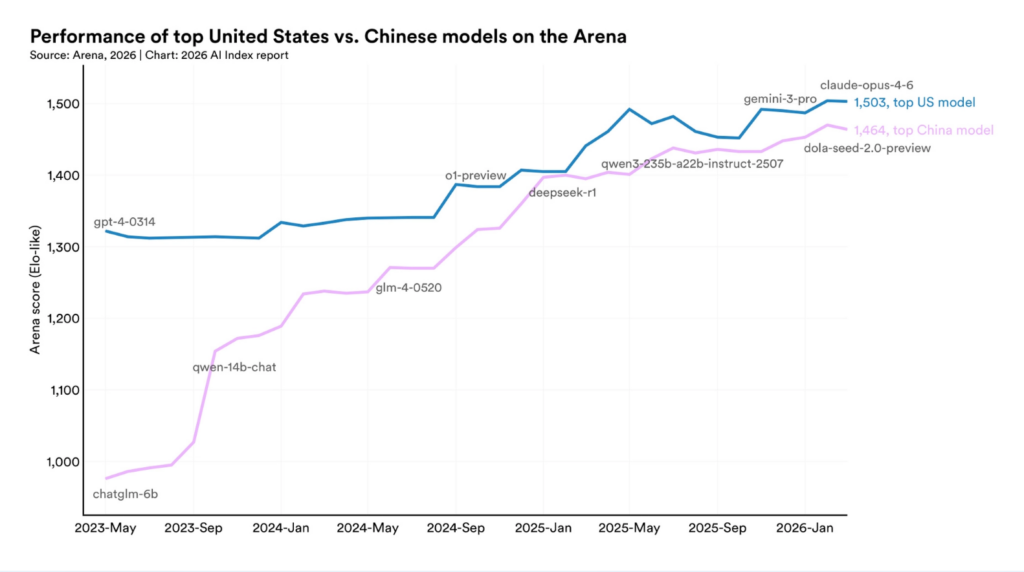

First, the gap between US and Chinese models has steadily narrowed over time, showing that the David and Goliath comparison in the previous subsection is not far off.

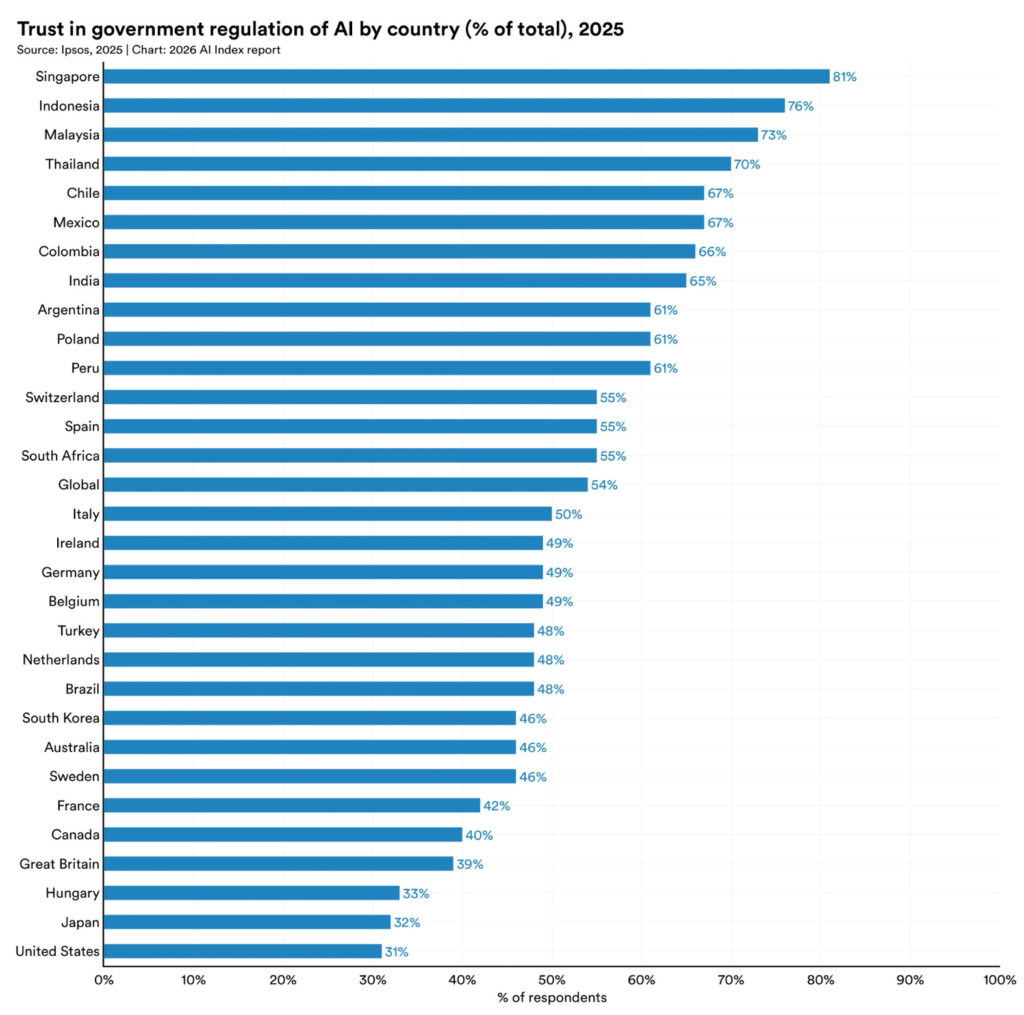

Second, as I reported here a few months ago, a true AI transformation requires the right level of regulation and public acceptance, to avoid challenges like those around social media bans for adolescents.

On this point, too, the Stanford analysis is brutally clear about how much people in different countries trust their governments to regulate AI.

Over the last month, we have also seen the European Commission accelerating toward a Sovereign Cloud tender, signaling growing demand for more control and governance.

At the same time, Palantir published a 22-point mini manifesto on X describing its philosophy for the future, including AI, which, from my perspective, calls for a serious evaluation of how well it matches the EU’s key founding principles.

My Thoughts: As the EU enters a tender process that will require a progressively deeper understanding of the many layers where dependency on foreign technology will remain a fact for several years, it will be strategic to decide which layers to focus on first to ensure independence and business continuity, and also which layers conflict with the key values the EU is built on and should not receive further investment, even if they do not directly affect operations.

ESG

Energy

The domino effect

Last year we observed the blackout in Spain, apparently caused by a delayed grid reaction to an imbalance involving renewable energy. Recently, I reported how often some countries have started to struggle with energy production, with a growing share consumed by AI datacenters. Over many previous newsletters, I reported the concerns of different countries trying to regulate and limit the proliferation of AI datacenters, in some cases because of additional pollution and always because of their impact on the population’s energy bills. This has affected several projects, such as the recent OpenAI Stargate in Norway, and is accelerating Big Techs’ own energy production, which we discussed a long time ago, to limit the impact on the grid.

The upcoming summer is predicted to be the hottest so far, with people struggling to travel due to jet fuel shortages and with potential energy peaks that cannot be compensated quickly. This would coincide with a progressive increase in AI consumption, not yet mitigated by new, lower-energy AI chips, which will take time to replace the current workload of existing datacenters.

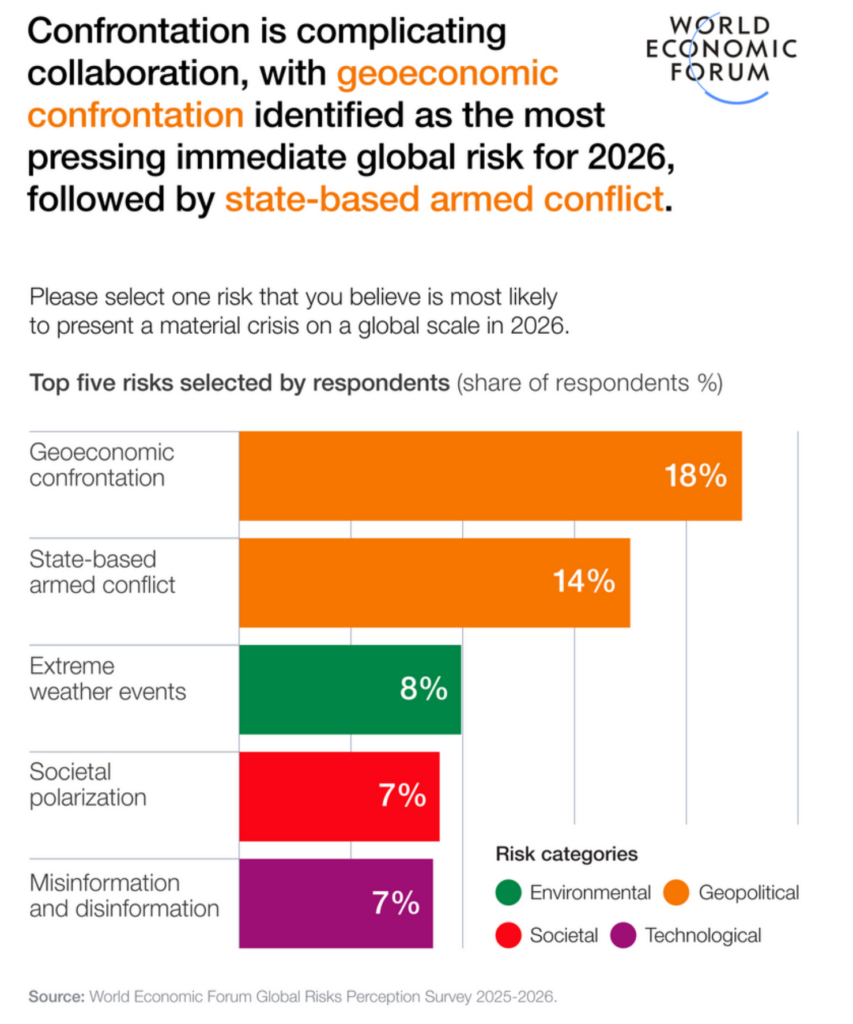

In January, well before the war in Iran, the WEF published its updated Global Risks Report, and the risk ranking was essentially as follows.

Considering that risks 1 and 2, as well as risks 4 and 5, are essentially already happening, risk 3 is highly likely to follow, especially given the predictions I just mentioned.

My Thoughts: The combination of increased power demand from accelerating AI-driven operations, prolonged oil scarcity, and a particularly hot season could create a severe energy imbalance in some countries that may be hard to contain. I hope to be wrong.

AI

Agentic

When the backbone fails

For more than a year, I have been warning about the importance of building autonomous agents through the right agentic AI orchestration, and of considering the effects of combining multiple agents, where errors multiply when agents are chained.

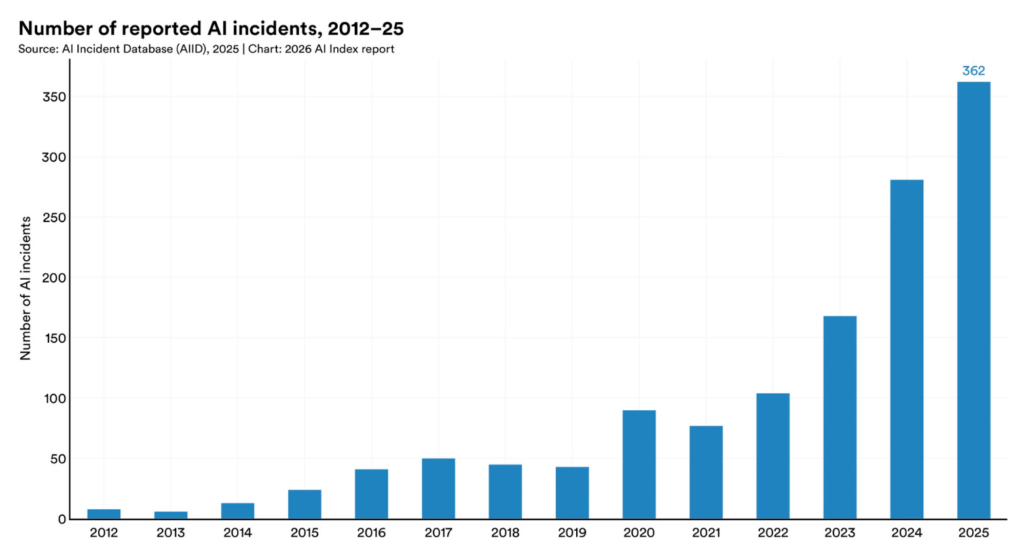
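To make the compounding effect concrete: if each agent in a sequential chain succeeds independently with probability p, the whole chain of n agents succeeds with probability p^n. The Python sketch below assumes independent steps with an identical success rate, which is a simplification (real agent errors are often correlated), and the function name `chain_success` is illustrative only.

```python
def chain_success(per_step_success: float, steps: int) -> float:
    """Probability that every step in a sequential agent chain succeeds.

    Assumes each step succeeds independently with the same probability,
    a simplification: real agent errors are often correlated.
    """
    if not 0.0 <= per_step_success <= 1.0:
        raise ValueError("per_step_success must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    # Independent events: the chain succeeds only if every step does,
    # so the per-step probabilities multiply.
    return per_step_success ** steps


if __name__ == "__main__":
    # Illustrative per-step reliability levels and chain lengths.
    for p in (0.99, 0.95, 0.90):
        for n in (1, 5, 10, 20):
            print(f"p={p:.2f}, {n:2d} chained agents -> "
                  f"{chain_success(p, n):.1%} end-to-end success")
```

Under these assumptions, a step that is right 95% of the time, chained ten times, completes correctly only about 60% of the time (0.95^10 ≈ 0.60).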

History already shows a number of major service outages caused by automation. Months ago, we discussed the major Amazon outage. More recently, there has been considerable noise about how a single self-judgment error by an agent brought PocketOS to a complete shutdown in 9 seconds, and, looking again at the Stanford AI report, there is a clear increase in AI incidents overall.

Some of these protocols are very recent and may require improvement. The Model Context Protocol (MCP), which agents use to exchange context with tools and data sources, has recently come under scrutiny for potential vulnerabilities, as has Langflow, one of the best-known open-source orchestration layers. These issues raise serious security concerns for the agentic approach overall. On the other hand, as I mentioned in the past, these are still early-stage protocols that will evolve and are already being extended regularly.

Some companies, concerned about the risk of such open integrations, have played it safe by restricting agentic operations to their own engines, limiting how others can interact with them in exchange for a certain level of reliability; SAP, for example, recently tightened its API policy. This has raised concerns about vendor lock-in and a progressive closing-off, as opposed to a more modern composable architecture that allows integration and orchestration with third parties and the wide range of external LLMs.

My Thoughts: The risk becomes more substantial when critical processes are attached to a chain of fully autonomous agents, because their intrinsic stochastic behaviour must be guarded to avoid compounding errors that push results outside acceptable boundaries. Proper human intervention to correct or judge cannot always be delivered in real time, but it is a key element of the overall structure that companies need to build and operate.
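One way to picture such a guard is a bounds check after every agent step that escalates out-of-range outputs to a human reviewer instead of passing them downstream. The Python sketch below is a minimal illustration under simplifying assumptions: each agent step is modeled as a plain function on a single numeric value, and the names `Bounds`, `run_guarded_chain`, and `escalate` are hypothetical, not part of any agent framework.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical types: an agent step maps one numeric value to the next, and a
# reviewer receives (step index, suspicious value) and returns a corrected value.
AgentStep = Callable[[float], float]
Reviewer = Callable[[int, float], float]


@dataclass
class Bounds:
    """Acceptable range for an agent's output (the business boundaries)."""
    low: float
    high: float

    def contains(self, value: float) -> bool:
        return self.low <= value <= self.high


def run_guarded_chain(steps: List[AgentStep], initial: float,
                      bounds: Bounds, escalate: Reviewer) -> float:
    """Run steps in sequence, stopping out-of-bounds outputs from propagating."""
    value = initial
    for index, step in enumerate(steps):
        value = step(value)
        if not bounds.contains(value):
            # Hand the output to a human instead of feeding it to the next agent.
            value = escalate(index, value)
            if not bounds.contains(value):
                raise RuntimeError(
                    f"step {index} output {value} still outside bounds after review")
    return value


if __name__ == "__main__":
    def reviewer(index: int, value: float) -> float:
        print(f"escalated: step {index} produced {value}")
        return 100.0  # stand-in for a human correction

    chain = [lambda x: x * 2, lambda x: x + 100, lambda x: x - 50]
    print(run_guarded_chain(chain, 10.0, Bounds(0.0, 100.0), reviewer))
```

The design choice is to stop an error at the step where it appears, which is what prevents the compounding effect; the trade-off is that the escalation path depends on a reviewer who may not be available in real time.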

LLM

The capabilities extensions

Recently, LLM providers have exposed new capabilities, embedding agents directly in their offerings that can interact with users’ desktops and deliver vertical expertise in different fields, most recently cybersecurity. This is the case with Anthropic, which built Mythos to augment its coding capabilities through focused training and, as a result, obtained a model capable of spotting badly engineered and implemented code, as well as the security holes it opens up.

The fact that access has been limited to a restricted consortium under Project Glasswing, composed only of US companies, raises questions about how AI sovereignty plays out when some companies in one country have a potential way to identify not only their own exposures but also those of third parties and competitors, which could be used for inappropriate purposes.

Meanwhile, China also came up with an alternative from Qihoo 360 to spot major cybersecurity threats, and the hype ramped up as Mythos identified, and proposed a fix for, a bug in OpenBSD code that no human had spotted in 27 years.

My Thoughts: The key point is that no solution can remain within a restricted consortium for long. After a while, some insights leak out or competitors reach the same point, and the capability becomes generally available, especially with digital technologies. In the next section, I address in depth how this can affect a company’s reliability from a cybersecurity point of view.

Cybersecurity

Defence and Threats

The Phantom Menace

Turning to cybersecurity and AI-influenced threats, let’s tackle the effects of Anthropic’s recent Mythos, as well as the Chinese alternative from Qihoo 360 that has just come to market.

The market is commoditizing threats and making them effectively agentic, with autonomous activities executed in real time that demand real-time response and mitigation.

The zero-day market has historically been limited to a specific set of threat actors able to exploit flaws to spy on and steal content from companies and individuals, and potentially blackmail them.

This type of threat has been part of the deep web and has even moved into the regular market, with solutions able to hack products, phones, operating systems, and even entire governments, and it is being used to support espionage between countries.

Now, the acceleration of AI engines able to identify vulnerabilities is commoditizing this capability and potentially making these instruments available to a much larger set of actors, not always with good intentions. In reality, such instruments are restricted to a limited set of companies under Project Glasswing for a good reason: it gives time to produce accurate patches within a consistent, multi-layered framework. At the same time, from my point of view, this raises a question, because this set of companies falls under a single country’s jurisdiction and potentially has a wide range of people with privileged access to a potentially dangerous engine. It is also possible, as happened a few weeks ago, for a company like Anthropic to be breached and for unknown actors to gain unauthorized access to Mythos. This could be used to steal industrial exclusives and other potentially patentable technologies from other countries or, even worse, to attempt to compromise national security. Even with the best intentions, such a consortium should reflect a cross-country reality to be properly balanced.

Apart from this, there is another element of vulnerability intrinsic to many companies. Since every company carries some legacy accumulated over the years, this kind of visibility can help identify and close the top security holes first and accelerate backlog closure, but it can also surface risks that were not part of the initial overview. So, initially, this capability will definitely increase the backlog, as it brings unprecedented visibility.

While companies may discover a large legacy of applications requiring extensive patching, sometimes under technical constraints, the effect of new products built with oversimplified AI coding is also emerging. These sometimes represent what is now called Shadow AI, far more impactful than the better-known Shadow IT, often replacing more mature but limited Robotic Process Automation (RPA) with simplistic and potentially insecure new software as a result of weak or late AI governance.

Just as, by a basic software engineering principle, applying a fix can introduce other bugs, touching stable legacy software can also introduce new bugs or unexpected behavior, and this can carry a large, unplanned price tag for the business outcomes that depend on these digital workflows.

Over many months, we have discussed the effect of layoffs attributed to AI automation, which sometimes actually mask silent layoffs driven by restructuring. However, layoffs during or before this kind of transformation can create substantial risk once legacy applications have to be properly re-engineered, dismissed, or consolidated, because the proper, comprehensive know-how may no longer be available.

Even for enterprises without such legacy applications, the mass wave of fixes coming from Project Glasswing will introduce a substantial volume of changes imposed by third-party vendors, which can affect the lifecycle of some products and require quality validation by senior experts.

On top of this wave comes IT/OT integration, which is moving higher on the agenda for 2026 and beyond, as Gartner noted last October. Since OT infrastructure has a different lifecycle than IT, the automated threat identification resulting from the Glasswing approach may also be extended to OT, which can in turn affect the level of integration and the capabilities a company can benefit from.

Patching security holes in legacy applications is far more complex and impactful than building an autonomous agent to execute a specific task, because an entire production workflow must be validated for correct end-to-end behaviour. It is therefore key to account for the effort companies face in improving their existing landscape, including integration and process reliability, and for the need for a workforce with the right seniority to address these changes.

I have been talking about Post-Quantum Cryptography (PQC) for more than a year now, and Gartner reminds us that the transition has a deadline within the next 4 years. This means that enterprise applications must be upgraded or replaced to reach PQC compliance to be safe from this type of future decryption threat. As quantum computing keeps accelerating, it is realistic to expect that deadline to shrink rather than be extended.
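A first practical step toward such a roadmap is a cryptographic inventory: knowing which applications still rely on public-key algorithms that a large quantum computer could break (RSA, Diffie-Hellman, DSA, and elliptic-curve schemes such as ECDSA, ECDH, X25519, Ed25519) versus the NIST post-quantum standards (ML-KEM in FIPS 203, ML-DSA in FIPS 204, SLH-DSA in FIPS 205). The Python sketch below classifies a hypothetical inventory; the application names and the status labels are invented for illustration, and a real inventory would come from scanning code, certificates, and protocol configurations.

```python
from typing import Dict, List, Set

# Public-key algorithms vulnerable to Shor's algorithm on a large quantum computer.
QUANTUM_VULNERABLE: Set[str] = {"RSA", "DH", "DSA", "ECDH", "ECDSA", "X25519", "Ed25519"}
# NIST post-quantum standards (FIPS 203, 204, 205).
PQC_STANDARDS: Set[str] = {"ML-KEM", "ML-DSA", "SLH-DSA"}


def classify(algorithms: Set[str]) -> str:
    """Return a (hypothetical) migration status for one application's algorithms."""
    vulnerable = algorithms & QUANTUM_VULNERABLE
    pqc = algorithms & PQC_STANDARDS
    if vulnerable and pqc:
        return "hybrid"     # classical and PQC combined, e.g. a hybrid key exchange
    if vulnerable:
        return "migrate"    # only classical public-key crypto: on the PQC roadmap
    if pqc:
        return "pqc-ready"
    return "review"         # nothing recognized: needs manual analysis


def migration_backlog(inventory: Dict[str, Set[str]]) -> List[str]:
    """Applications that still depend solely on quantum-vulnerable algorithms."""
    return sorted(app for app, algs in inventory.items() if classify(algs) == "migrate")


if __name__ == "__main__":
    # Hypothetical inventory: application -> public-key algorithms in use.
    inventory = {
        "legacy-erp": {"RSA"},
        "customer-portal": {"X25519", "ML-KEM"},
        "signing-service": {"ML-DSA"},
        "ot-gateway": {"ECDSA", "ECDH"},
        "batch-jobs": set(),
    }
    for app, algs in sorted(inventory.items()):
        print(f"{app}: {classify(algs)}")
    print("Migration backlog:", migration_backlog(inventory))
```

Keeping the classification separate from the inventory source makes it easy to re-run as scanners find new systems, which matters if the deadline shrinks.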

Finally, AI augmentation poses a threat to organizations through progressive disengagement, which I discuss in the next section on Workforce Transformation, creating a risk of workforce gaps or burnout.

My Thoughts: Companies must prioritize based on their challenges across the different dimensions reported here. As AI poses more immediate threats, accelerates the overall transformation agenda, and potentially introduces more challenges in the early stages, I see a perfect storm for companies that need to address many of these challenges at once, deciding on the top priorities while also missing key people for various reasons. The coming years will be strategic for retaining and upskilling the key people who will lubricate an engine still in transformation.

Workforce Transformation

Engagement

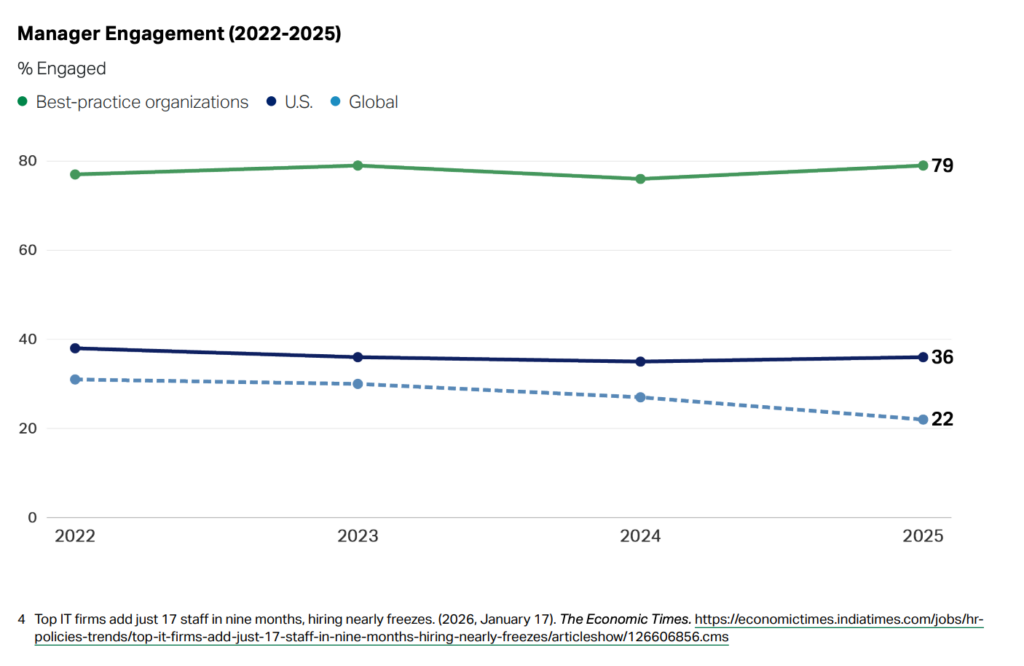

Analyzing Gallup’s recent 2026 Global Workforce report, it is clear that engagement has reached a new low and, most relevantly, that managers’ engagement has also declined.

Looking at the details, India drove a strong decline, affected by how AI is influencing its market. The next subsection on BPO touches on this point.

What stands out is the large gap in managers’ engagement between best-practice enterprises and the global average, and that the gap between managers’ engagement (22%) and employees’ engagement (20%) has narrowed compared to the past. A lack of trust in leadership and weak talent management, combined with missed training, especially AI upskilling, are progressively producing a workforce at risk of not actively contributing to the enterprise’s future, also due to concerns about AI threats and market instability.

Investing from the top in strategic leadership and in the genuine development of leaders and employees, and creating the conditions for a network of trust rather than a hierarchical empire, builds the cross-functional capabilities that carry trust across the entire enterprise toward common goals. Now more than ever, the rate of change requires building this kind of engine of trust.

I recommend exploring Gallup’s analysis in detail, especially the differences across countries and regions of the world and how they behave across different dimensions.

Shared Services and BPO

The shift from Shared Services to AI Global Business Services (AI GBS)

First, I want to recall my September 2025 newsletter, in which I foresaw that AI-driven automation would progressively shrink BPO as we know it, and that companies could start insourcing some activities back.

There are a few components to consider. Over the years, BPO developed as a way to outsource partially structured, low-complexity processes with limited strategic value: processes not simple enough to be fully automated with Robotic Process Automation (RPA), but predictable and repetitive enough to be handed out and scaled by a low-cost human workforce.

At the same time, companies moved some processes into shared services, gaining efficiency across the enterprise for common, standardized, well-defined core processes.

AI automation is raising the bar on how much can be automated, now including manual, semi-repetitive steps, and, as I already mentioned last September, I expect this to impact the offshoring business overall.

McKinsey clearly describes the shift from shared services toward AI GBS in its State of Organizations 2026 report and foresees an acceleration toward more AI-automated shared services.

My Thoughts: As companies embed AI further into their processes, I also expect their outsourcers to reduce costs as AI replaces human activities, and clients to start pushing for cost reductions. This is a challenging situation: on one side, BPO providers have surplus workforce to reallocate without necessarily having demand to cover, and can play a vendor lock-in strategy only in the short term. On the other side, companies may progressively decide to insource some non-core processes once AI makes automating them cheaper than offshoring. From my perspective, this trend will be significant over the next couple of years.
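The insourcing decision described above comes down to a break-even comparison between the offshore fee and the cost of building and running the automation in-house, including the human oversight it still needs. The Python sketch below illustrates that arithmetic; the three cost categories are a simplifying assumption, the names `ProcessCosts` and `payback_years` are hypothetical, and the figures are placeholders, not market data.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ProcessCosts:
    """Cost inputs for one outsourced process (placeholder figures, same currency)."""
    offshore_annual_fee: float    # yearly cost of the BPO contract for this process
    automation_build_cost: float  # one-off cost to build the agentic workflow in-house
    automation_run_cost: float    # yearly run cost: compute, licences, human oversight


def payback_years(costs: ProcessCosts) -> Optional[float]:
    """Years until in-house automation recovers its build cost, or None if it never does."""
    annual_saving = costs.offshore_annual_fee - costs.automation_run_cost
    if annual_saving <= 0:
        return None  # running the automation costs as much as, or more than, outsourcing
    return costs.automation_build_cost / annual_saving


if __name__ == "__main__":
    # Placeholder numbers for illustration only.
    example = ProcessCosts(offshore_annual_fee=1_000_000,
                           automation_build_cost=600_000,
                           automation_run_cost=400_000)
    print(payback_years(example))
```

As AI run costs fall, the annual saving grows and the payback period shortens, which is when insourcing becomes attractive; if the outsourcer passes its own AI savings on through a lower fee, the comparison shifts back in its favour.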

Giuseppe Genovesi (GG)

This newsletter is republished on LinkedIn and on Substack, and allows comments on both.