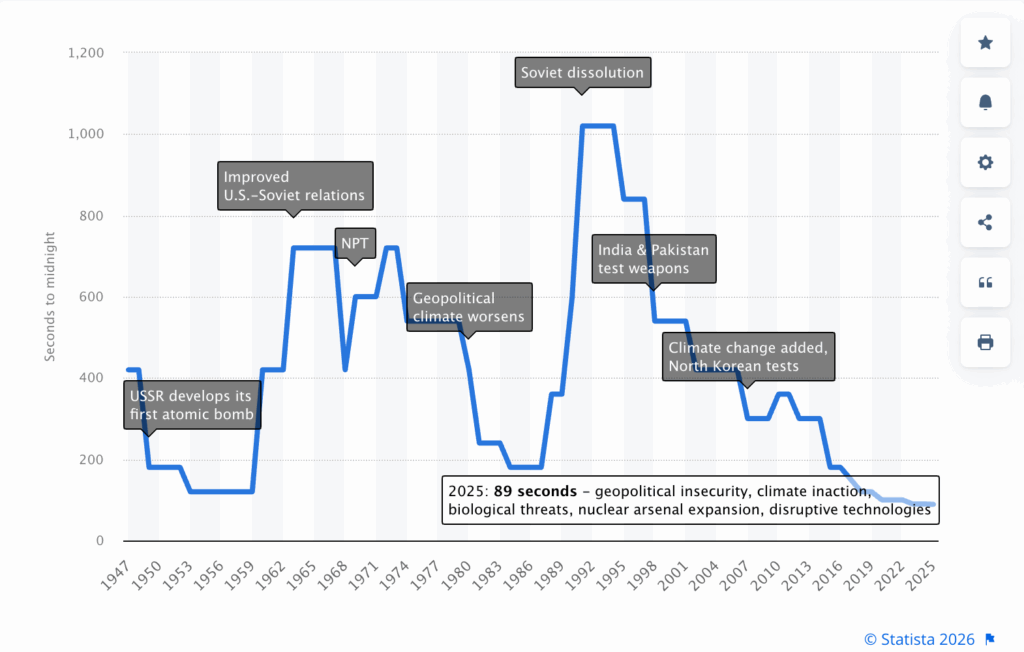

It was 1984 when Iron Maiden released the song “2 Minutes to Midnight”, at the height of the Cold War between the US and the Soviet Union. The title refers to the Doomsday Clock, which first reached 2 minutes to midnight in 1953 and was moved forward to 3 minutes in 1984.

We are now in 2026, well below that limit and 4 seconds lower than the Doomsday Clock value I reported last year in my February 2025 newsletter. This month’s picture is the Statista overview of the Doomsday Clock since its creation in 1947, to show how things have evolved, but also how fast they can improve when there is a common focus.

In this month’s newsletter, I will also focus on some takeaways from the World Economic Forum 2026, especially on AI, climate, and workforce transformation. They are linked to the themes I follow every month, and as usual I will reflect on past predictions.

You can find the full Doomsday Bulletin here and the video announcement here.

This is a long-form newsletter, hand-written, speaking about inflection points influenced by technology. It’s based on articles I find relevant to share and is infused with my thoughts. It’s for critical thinkers who love to hear complex correlations, self-reflect on the consequences of trends, and exchange opinions on how to tackle the best possible approach for the future.

Quick Executive Takeaways

Key technology priorities to focus on for your business at this stage, if not already done:

- Offshoring vs Reshoring: This remains a key topic to keep under consideration. Sourcing back is driven by repatriation threats and by easier automation of processes with AI. The primary opportunities are customer services, service desks, and shared services, but they are not limited to these

- Data Governance for IT/OT and a fully integrated engine

- Software Development: Using AI to effectively improve tests, code refactoring, and fast prototyping through vibe coding

- Agentic AI: Select a minimal set of use cases to build in production, with a proper TCO at production-size volume and a clear ROI

- Clouds: Multi-cloud and repatriation considerations by service criticality

- AI Roadmap: Priority governance around guardrails and cybersecurity

- HR: Time to ramp up AI-driven training platforms for up-skilling the entire workforce on AI. It’s strategic to have a workforce that is AI-confident in day-to-day work

- Cybersecurity: Clear focus on a roadmap for replacing/updating/consolidating legacy applications that do not support Post-Quantum Cryptography (PQC)

Market Evolution

Chipsets & semiconductors

The Angstrom Era and the Silicon Paradox

The ramp-up of 2nm production is accelerating and is going to generate a “digital divide” between those who can produce at this level of miniaturization and those who remain behind. Foundries like TSMC, Samsung, and Intel, relying on lithography equipment from ASML, are ramping up to produce at 2nm (some already at 1.8nm, with the prospect of reaching around 1.4nm in a few years), serving clients like Nvidia, Apple, and others and building the manufacturing base for their modern AI chipsets.

We are entering the Angstrom Era, which requires highly advanced foundry capabilities as we approach the 1nm miniaturization level.

Two key aspects are linked to this. The first is that 2nm production is going to reduce energy consumption by up to 30%, making future AI chipsets much more efficient. The second is that the investment needed for such advanced technology is going to divide the world into those who can invest several billion in building those foundries and those who can’t, creating an innovation divide.

This polarization toward AI chipset production also has a side effect. A comprehensive overview from Deloitte here draws attention to the fragility of chipset investments, considering that AI chips are about 0.2% of total chipset production by volume, yet they represent around 50% of revenues for those enterprises.

This means that a small-volume, very high-margin part of the business is driving the priorities of the big chipset fabs. Lower-margin semiconductors, including chipsets for automotive and for client devices like consumer computers, fall behind, while top priority goes to semiconductors for servers, AI chipsets, and memories optimized for AI.
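To make this imbalance concrete, here is a back-of-the-envelope sketch in Python. It assumes only the two shares quoted above (0.2% of units, around 50% of revenues) and treats them as exact. The function name is mine, and the result is an implied average, not a price reported by Deloitte.

```python
def revenue_per_unit_ratio(unit_share: float, revenue_share: float) -> float:
    """Average revenue per unit in one segment vs. per unit in everything else.

    Both arguments are fractions of the total (strictly between 0 and 1).
    """
    if not (0 < unit_share < 1 and 0 < revenue_share < 1):
        raise ValueError("shares must be strictly between 0 and 1")
    segment = revenue_share / unit_share           # relative revenue per AI chip
    rest = (1 - revenue_share) / (1 - unit_share)  # relative revenue per non-AI chip
    return segment / rest


# Shares quoted in the text, assumed exact for this illustration.
ratio = revenue_per_unit_ratio(unit_share=0.002, revenue_share=0.50)
print(f"An average AI chip brings in about {ratio:.0f}x the revenue of an average non-AI chip")
```

Under these assumptions, the average AI chip is worth roughly 500 times the average non-AI chip in revenue terms, which helps explain why such a small volume can dictate production priorities.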

The development of chipsets with lower energy consumption and more AI capabilities is going to be a killer application for Edge AI, enabling the Physical AI that requires computing at the factory level for robotics in OT with real-time latency. This is strategic, as I mentioned last month in my newsletter on scaling low-consumption IT/OT, and it is also linked to the ramp-up of autonomous agents, as I mentioned in March 2025 here, where I reflected in “What could happen?” on the possibility that more edge computation demand would accelerate Physical AI.

The year 2026 is already marked by a competition for access to chipsets, semiconductors, and memories, and some companies will suffer from a lack of access to them. This again generates a divide in high-energy production, modern low-energy AI chipsets, and the capability to gain exclusive access to such production.

It reminds me of 2021, when the semiconductor crisis was driven by lower production due to COVID, which caused factory shutdowns, and at the same time by increased demand, as many people working from home required more equipment and companies were pushed to modernize their backbone, all depending on more semiconductors.

This time, the difference is that the crisis is driven by increased demand for newer AI chipsets, which hits the less-served consumer market and will impact businesses like automotive that get lower priority in the supply chain.

My Thoughts: An AI-first strategy is impacting many other demands, increasing the cost and reducing the availability of other services. This could accelerate the questioning of the benefits of AI, just when many other things are running short.

The rise in energy costs due to AI consumption has raised concern among the population about AI datacenters, and some companies have resorted to building them without residents’ knowledge (more in the ESG section). AI’s own development has even impacted the delivery of services to clients, as you can see in the next section on Big Techs.

All these elements indicate a way of progressing that is not really aligned between market and population needs, which I feel could create substantial friction as we progress. While COVID was an external cause largely out of our control, here the speed of the AI transformation, especially if not linked to widely recognized tangible benefits, could be challenged by the restrictions and limitations arising in other contexts and imposed by some governments.

Big Techs

When AI’s own development eats into the growth

The recent Microsoft financial results, even though better than expected in some aspects, didn’t create confidence among investors, and the stock price dropped. One of the tensions is the real adoption of Copilot by clients, which is only a fraction of all M365 users. It was often rumored in the past (you can find the reference in a former newsletter) that many users, even with a Copilot account, tended to use other tools such as ChatGPT or Gemini, finding them more effective.

A strong dependency on OpenAI (45%) for the AI backlog is also a reason for concern, and a final remark was the need to invest in Microsoft’s own usage of Azure to deliver AI, which eats into the Azure capacity available to clients.

Apple decided to use Google Gemini to recover its own Siri development, which can indicate a mid-term strategy to re-enter the competition while accelerating. Apple reacted late on AI but has an interesting development in Edge AI with Apple Intelligence for the consumer market, with its own on-device LLM capability.

Salesforce CEO Marc Benioff made a call for AI regulation a few weeks ago. From my perspective, the demand for regulation is a long-term vision that follows actions already happening in many countries (as I mentioned in January’s newsletter), from Australia and others on social platform regulation to the EU with the AI Act. All these are clear examples of a misalignment between the US Administration, which is trying to keep the market free of regulation, and a stricter market in the EU, regulated by the AI Act and most recently also ramping up the regulation of social media.

SAP stock also dropped significantly over the last few weeks, as a result of investors’ concerns about its capability to transition businesses to a new cloud model and about the way AI will transform how legacy software gets consumed and evolved. Here, I think SAP was late with a real cloud strategy and could benefit from AI to bring more integration capabilities in the future, working more as a core component rather than competing as one comprehensive solution.

Oracle’s stock was also considerably impacted by the increasing investment in long-term datacenters for Stargate, tied to OpenAI’s development. Here, the $500B investment is coming, as with other big tech, at the expense of the workforce, and it puts the delivery of services at risk over the long term. For this reason, banks are also looking for a clear distribution of the risk.

OpenAI is also under pressure from the big investments it still needs to make before being profitable, and some big “circular” deals are disappearing, like the former Nvidia-OpenAI agreement.

My Thoughts: The AI acceleration is based on promises of improvement that are far from matching the investments made. These investments will require years to break even.

Microsoft went all in with Copilot on an approach that is still used today by only a fraction of users and, most importantly from my perspective, it is missing a proper LLM of its own, making it strongly dependent on OpenAI and Nvidia, while Google played stronger to decouple from others with Gemini (also using its own Tensor hardware).

The level of investment exposure for future AI at Microsoft, Oracle, and OpenAI, together with the big backlog generated, is impacting their own revenue, and part of this run could be strongly influenced by regulations that will progressively ramp up across different countries.

The challenge of sovereignty, especially when we move into the field of autonomous Agentic AI, will force many countries to regulate and will influence the speed of evolution.

AI is definitely here to stay, but it will most probably require some consolidation in terms of sizing, timing, and players, probably through several small bubble distresses rather than explosions. Some industries have planned smaller AI budgets until a proper ROI is shown, and some could slow down depending on their business, which could reduce demand. So far, though, it seems that many of the big techs have filled their pipelines with demand for the entire 2027, even if some have already suffered in some ad hoc cases.

It is hard to say whether there will be some harder stop-and-go in 2026, once some companies have to review the real benefits of agentic AI in operations.

It’s clear that not all enterprises have the same level of digital maturity, and the stage they are at determines how the acceleration of AI adoption influences their overall plan. Those behind will also have to invest in AI, but will have to work more on improving data, which will shift investments toward the prerequisites.

Digital Sovereignty

France is doubling down on it

After the case of Airbus and its EU cloud sovereignty demand, which I discussed in the former newsletter, France recently raised the bar of autonomy: Zoom and Teams are to be removed from government and institutions to reduce dependency on US Big Tech, in favor of a French solution, Visio (not to be confused with Microsoft Visio).

Some companies like Amazon accelerated in offering an EU Sovereign Cloud to answer part of the independence required, but a big open topic remains: the US CLOUD Act can force those US companies to grant access to client data stored outside the US as well.

Most recently, Draghi suggested a way to federate Europe. I spoke at length in my October 2024 Newsletter about Draghi’s initial recommendations to invest in AI and Quantum Computing. If a real federated Europe were to come from a subset of the EU countries, that could justify creating common technologies across those federated countries and would make real technology sovereignty much more realistic.

My Thoughts: Real sovereignty is complex because it spans many layers. MS Teams is just one of the most visible parts of a stack that goes through operating systems (for clients, all US-based), mobile phone OSes (practically all US-based), datacenter virtualization and chipsets (mainly US), network infrastructure backbone (mainly US), and so on.

I am not forgetting all the business applications in the cloud that run most enterprises and are getting even more AI-dependent with the agentic approach.

The complexity lies in understanding what is realistic in the short and long term, and how much the short-term actions create real sovereignty rather than a false feeling of e2e control.

My main concern, which I have repeated over several months, is about business continuity linked to AI when agents execute automation and depend on AI engines controlled by countries outside the area of friendship. In such cases, blocking an LLM would quickly stop the agents from operating.

Even worse, an AI could reply with wrong information rather than not answering at all, changing behavior and impacting, for example, automated purchasing operations (just to take an easy example that came to my mind).

A point that will become relevant is balancing different sources that are as independent of each other as possible: an agentic system that really compares suggestions and decides across different inputs, combined with stronger edge AI computation that reduces the dependency of many operational activities on external AI that is not fully under control.
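As a rough illustration of that idea, the Python sketch below asks several independent sources, accepts an answer only when enough of them agree, and otherwise falls back to a locally controlled edge model. Everything in it is hypothetical: the provider functions are stubs, the names are mine, and comparing answers by exact string match is a deliberate simplification of what a real system would need.

```python
from collections import Counter
from typing import Callable

# A "source" is any callable that takes a prompt and returns an answer,
# or raises if the provider is blocked or unreachable (hypothetical interface).
Source = Callable[[str], str]


def decide(prompt: str, sources: dict[str, Source], edge_fallback: Source,
           min_agreement: int = 2) -> tuple[str, str]:
    """Return (answer, origin), where origin is "consensus" or "edge"."""
    answers: list[str] = []
    for ask in sources.values():
        try:
            answers.append(ask(prompt).strip().lower())
        except Exception:
            # A blocked or failing provider is skipped instead of stopping the agent.
            continue

    if answers:
        best, votes = Counter(answers).most_common(1)[0]
        if votes >= min_agreement:
            return best, "consensus"

    # No quorum (outage or disagreement): use the locally controlled model.
    return edge_fallback(prompt).strip().lower(), "edge"


# Stub providers, for illustration only.
def provider_a(prompt: str) -> str:
    return "approve"

def provider_b(prompt: str) -> str:
    return "Approve "

def provider_c(prompt: str) -> str:
    raise ConnectionError("provider blocked")

def local_model(prompt: str) -> str:
    return "hold"


result = decide("Reorder 500 units of part X?",
                {"a": provider_a, "b": provider_b, "c": provider_c}, local_model)
print(result)  # ('approve', 'consensus')
```

The design choice here is that a single blocked or misbehaving engine can neither stop the automated purchasing flow nor decide it alone; when there is no quorum, the decision stays with the model the company controls.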

ESG

Energy

The secret datacenters

We discussed in the Big Tech section the $500B datacenter transformation involving Oracle, OpenAI, and others from the point of view of investment exposure risk. It’s also interesting to look at the expected energy consumption that comes with the predicted increase in AI. Energy demand is expected to increase by 17% in 2026 and to keep growing until 2030 at a rate of 14%, with a potential demand of 2,200 TWh. This analysis from S&P Global is quite shocking, as we are speaking about an amount of energy comparable to the entire consumption of India.
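To get a feeling for what these growth rates mean when compounded, here is a small Python sketch. It rests on two assumptions of mine that the text does not confirm: that the 14% applies to each year from 2027 to 2030, and that the 2,200 TWh figure refers to demand in 2030. The implied baseline is therefore an illustration, not a number from the S&P Global analysis.

```python
def growth_factor(first_year_rate: float, later_rate: float, later_years: int) -> float:
    """Cumulative multiplier: one year at first_year_rate, then later_years at later_rate."""
    return (1 + first_year_rate) * (1 + later_rate) ** later_years


# Assumption: +17% in 2026, then +14% in each of 2027, 2028, 2029, and 2030.
factor = growth_factor(0.17, 0.14, later_years=4)

# Assumption: the 2,200 TWh figure is the demand reached in 2030.
demand_2030_twh = 2200
implied_2025_twh = demand_2030_twh / factor

print(f"Cumulative growth 2025 -> 2030: x{factor:.2f}")
print(f"Implied 2025 baseline: about {implied_2025_twh:.0f} TWh")
```

Under this reading, demand roughly doubles in five years, which is the scale of change the grid would have to absorb.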

I mentioned in previous months how residents of some US states resisted the installation of new datacenters as they saw surges in electricity bills and pollution (for example, the gas turbines for xAI I mentioned months ago). In at least one case, the approach was to build the new AI capabilities in secret, inside a former crypto mining datacenter, to avoid residents’ reactions until the transformation was already done.

My Thoughts: The reflection here is similar to the one I made in the Big Techs part of the Market Evolution section. An aggressive approach that does not care about residents is going to backfire, as it is not sustainable together with the surge in costs.

While many big techs are investing themselves to sustain their own energy consumption, the explosion of datacenters is putting stress on the grid and will have consequences in the next years, as loose regulation for building datacenters will be increasingly challenged.

Some countries, as mentioned in former newsletters, relaxed the regulation, including skipping the geological impact analysis, but these fast approaches can backfire in the long run.

AI

Machine Learning, Agentic, Data

Autonomous agents beaming up

Even if Gartner warned that by 2027 more than 40% of Agentic AI projects will fail, it’s also true that being part of the other 60% is possible by identifying the right use cases, making a crystal-clear TCO, and building proper guardrails around the AI and its evolution.

2026 is seeing a push for more autonomous actions, adding an agentic approach on top of robotic process automation (RPA) to automate some recurring activities. There are already use cases in different areas, and they are simply getting more consistent.

The EU AI Act has an important compliance milestone on the 2nd of August 2026. By then, the requirements for high-risk AI will have to be implemented in companies, and those include several references to systems that could collect personal data, for example in HR or social networks.

I mentioned in the Chipsets part of the Market Evolution section that AI may be accessible mainly to countries capable of investing more in innovation, creating a partitioning of access to innovation. For this reason, I already raised last year my concern about a polarization of AI competence between the companies and countries that have wide access to it and those that don’t. A few years ago, I considered energy and its production the limit on access to technology development, but in the meantime sovereignty has added another variable. Recently, WEF 2026 mentioned exactly this risk: AI that is not properly developed across different countries can affect wealth distribution. I will go deeper into this in the Workforce Transformation section.

My Thoughts: The race for AI supremacy risks being influenced by the effects of the removal of AI safeguards in some countries. As recently described with detailed examples by the Bulletin of the Atomic Scientists board that sets the Doomsday Clock, we should not forget the importance of complying with proper AI governance, even if that can slow down the deployment of some solutions. Combined with sovereignty threats, this can be a further slowdown, leading to a race under limited conditions, at least in some relevant markets.

Robotics versus Physical AI

The meaning of Physical AI is now becoming more established: it covers everything about AI getting tightly coupled with robotics. This is quite relevant, as the evolution of AI is accelerating the evolution of robotics in different physical contexts.

We saw that Japan is going strongly in this direction: on one side there is Hitachi’s recent announcement, and on the other there are investors like SoftBank finalizing the previously announced acquisition of ABB Robotics.

The path toward a real Industry 5.0, which we started to touch on in this newsletter around one year ago, is getting concrete. IT/OT integration is one of the key prerequisites: it has been gaining relevance for a few years and is becoming a key piece of the overall integrated engine for every enterprise.

Quantum Computing and CyberSecurity

The year of Quantum security

In January 2025, I defined the year as the Quantum Computing year, as I expected companies to make serious progress in quantum computing, based on the acceleration of development in terms of modularization and new techniques to reduce errors from noise. Looking back at the year, Quantum Computing made strong advancements, and the number of qubits that big tech could manage rose significantly.

From my perspective, we are still at the bottom of the Quantum Computing ladder, and in the years to come we will see its power, especially when combining Quantum Computing with Machine Learning developments.

One approaching threat is that improved quantum computers will be able to break outdated encryption methods. This would apply to many companies’ applications and to a certain number of cryptocurrencies using blockchains that are not Post-Quantum Cryptography (PQC) compliant.

For this reason, the year 2026 is considered by many companies and big tech to be the Year of Quantum Security. As Gartner warned in the past, and as we also mentioned several times, the expectation is that by 2030 many non-PQC applications could be hacked, and Gartner recommended deep work on upgrading/consolidating applications to PQC in the next 3 years.

The acceleration we actually saw could make the agenda even more demanding, so it is quite relevant that every company makes a priority plan for PQC compliance and starts acting so as not to fall behind.
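A priority plan of this kind can start from a simple inventory. The Python sketch below is a minimal illustration under my own assumptions: the application names, the criticality scale, and the scoring formula are all hypothetical, and only the list of quantum-vulnerable public-key algorithms (those breakable by Shor’s algorithm) reflects established knowledge. Algorithms such as ML-KEM, ML-DSA, and SLH-DSA (NIST FIPS 203, 204, and 205) are treated as PQC-ready.

```python
from dataclasses import dataclass

# Public-key algorithms breakable by Shor's algorithm on a large enough quantum computer.
QUANTUM_VULNERABLE = {"RSA", "DSA", "DH", "ECDH", "ECDSA"}


@dataclass
class App:
    name: str
    algorithms: set[str]
    criticality: int          # 1 (low) to 5 (business critical), hypothetical scale
    data_lifetime_years: int  # how long the protected data must stay confidential


def priority(app: App) -> int:
    """Higher score = migrate sooner; 0 means no known vulnerable algorithm in use."""
    if not (app.algorithms & QUANTUM_VULNERABLE):
        return 0
    # Long-lived data is exposed to "harvest now, decrypt later", so it adds weight.
    # The formula itself is an arbitrary illustration, not a standard.
    return app.criticality * 10 + app.data_lifetime_years


def migration_plan(apps: list[App]) -> list[str]:
    """Names of the apps needing migration, most urgent first."""
    ranked = sorted(apps, key=priority, reverse=True)
    return [a.name for a in ranked if priority(a) > 0]


# Hypothetical inventory.
inventory = [
    App("intranet-wiki", {"DH"}, criticality=1, data_lifetime_years=1),
    App("new-api-gateway", {"ML-KEM", "ML-DSA"}, criticality=4, data_lifetime_years=5),
    App("legacy-erp", {"RSA"}, criticality=5, data_lifetime_years=10),
    App("hr-portal", {"ECDSA", "ML-KEM"}, criticality=3, data_lifetime_years=7),
]

print(migration_plan(inventory))  # ['legacy-erp', 'hr-portal', 'intranet-wiki']
```

Even a rough ranking like this makes it visible which legacy applications should be replaced, updated, or consolidated first.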

My Thoughts: Quantum Computing will be a future revolution as it gets into tangible use cases like hard optimization and simulation problems, but in the meantime it can be a threat to the encryption applied in many use cases. Companies have to focus on remediation at an early stage so as not to be late.

Workforce Transformation

I have kept evolving this section on Workforce Transformation since the former WEF 2025 Future Jobs Evolution trend. I think it is relevant to keep updating how the jobs market is changing and how the workforce has to transform to stay ahead of the AI transformation, embracing it rather than suffering it. One key sentence we always hear is that the future job will go to the employee using AI rather than to the employee without it. This whole section is about making sense of this.

Workforce change

IMF and the skill change

Over the last year, and it is still happening, we have seen a strong reduction in the workforce across companies. All Big Tech companies, but not only them, started in early 2025 to resize after COVID and the over-sizing that followed, sometimes playing the AI automation card rather than admitting the over-sizing.

There is an interesting analysis I referred to last month from Fortune, explaining exactly this behavior.

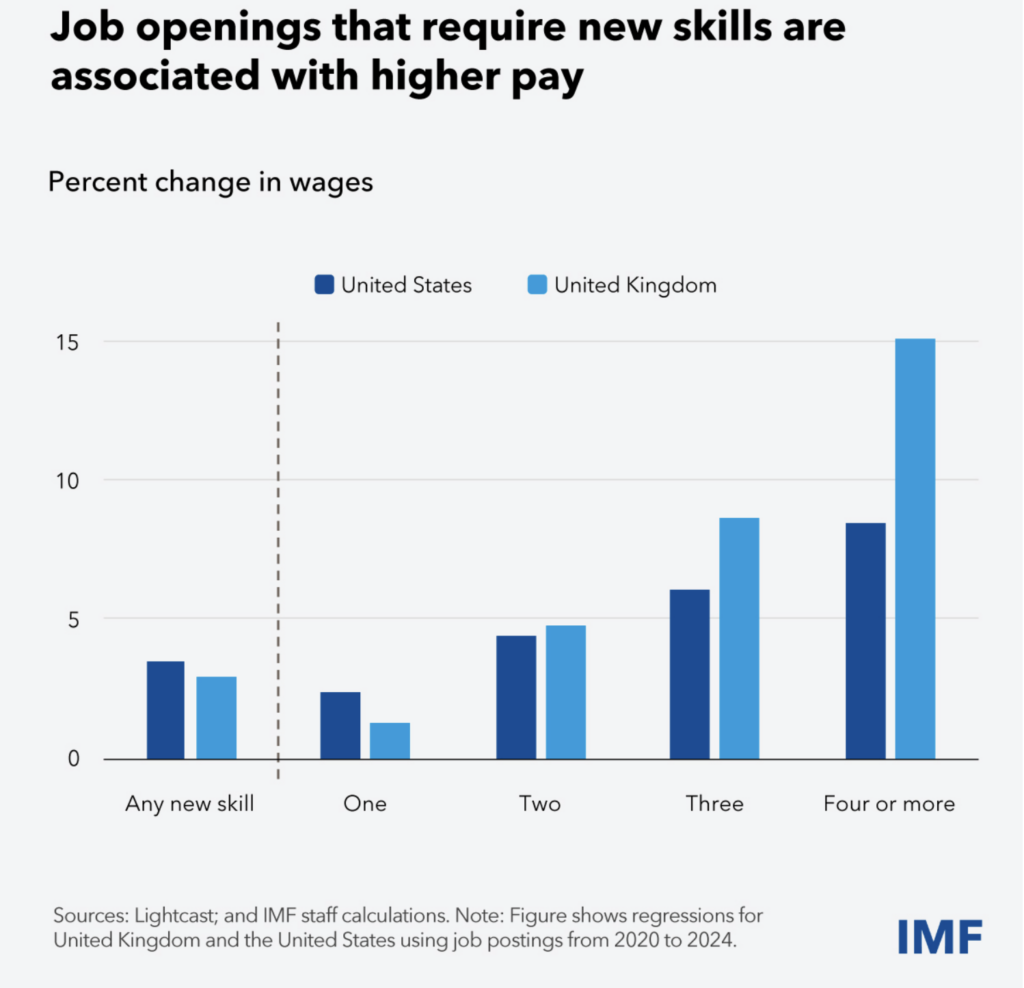

What the International Monetary Fund (IMF) published last month here shows a shift in the demand for new skills and a willingness to pay up to 15% more for them than previously, a clear indication of a shortage.

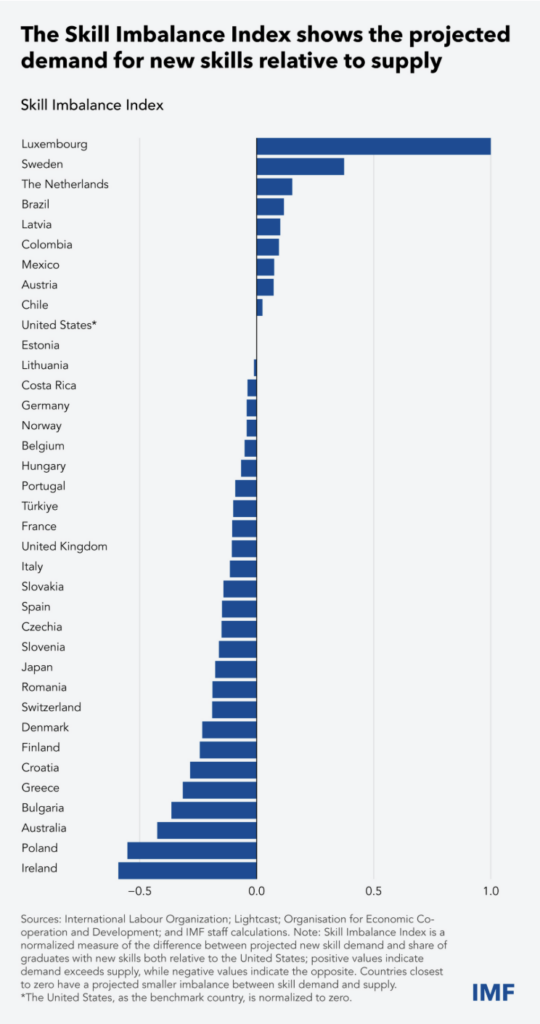

In parallel, they introduced an imbalance index to show which countries have the largest gap between the new skills in demand and what the market supplies.

These results show a few key takeaways. Some countries (the ones at the top of the list) lack competencies relative to market demand, which clearly points toward training and up-skilling. The ones at the bottom instead have a surplus of available skills but not enough demand, positioning them to export those competencies.

Clearly, this imbalance between demand and supply can be balanced by proper people mobility if countries facilitate it.

Workforce Augmentation

Fear Index as an effect of unclear paths and unstable geopolitics

Strictly correlated to the former point on the skills vs demand imbalance, WEF 2026 also looked from another angle at job transformation and the related evolution of workforce skills.

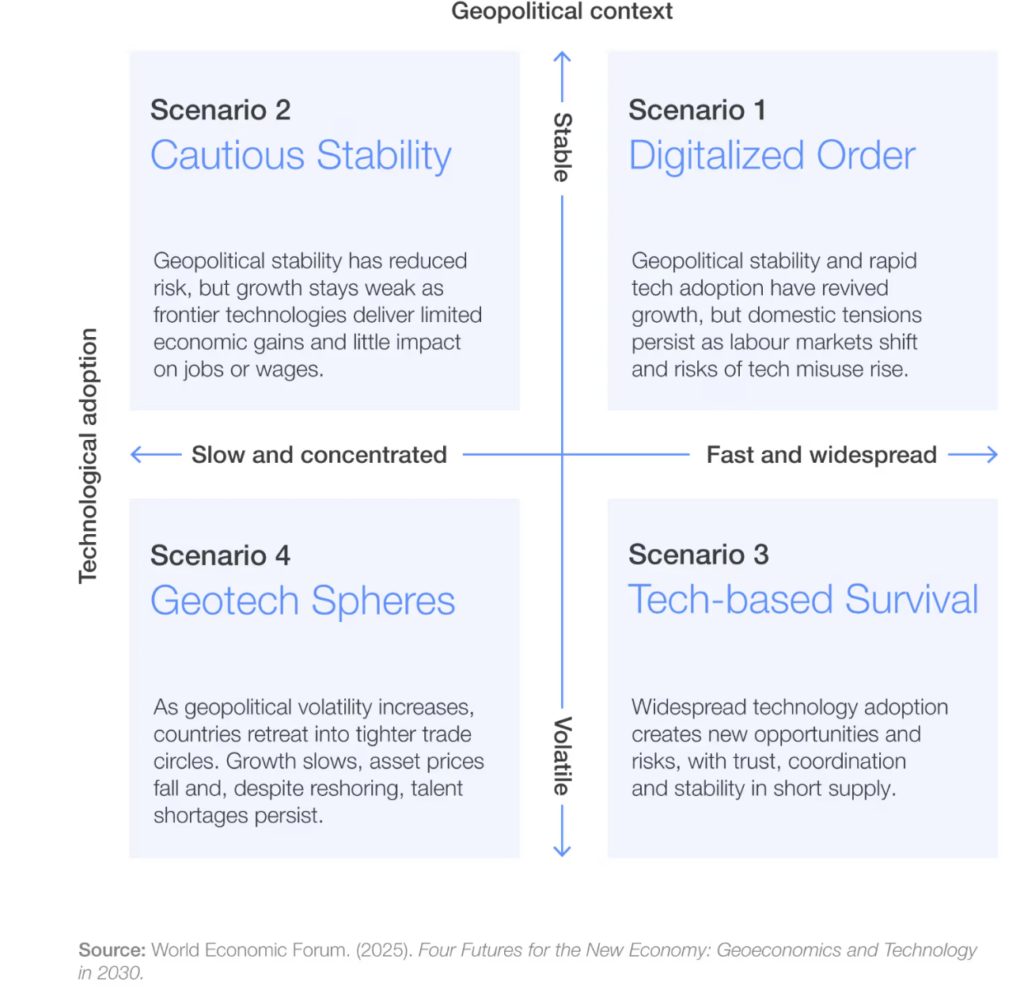

What I see as interesting from this different article is, first of all, the consideration of four possible scenarios based on the rate of adoption of technology and the geopolitical stability.

Looking at the different scenarios, it’s interesting to see that Scenario 4 is the one with the lowest technology change and work transformation, driven by a volatile geopolitical environment with limited alliances. On the other side, Scenario 1 is the most transformational in terms of technology, but thanks to better geopolitical stability it allows the workforce to shift and absorbs job losses through proper opportunities, including mobility.

Looking at the former section on countries with an imbalance between skills and demand, and correlating it with these scenarios, it’s quite visible that geopolitical instability strongly influences the real benefits coming from technology. Scenario 3, while accelerating on technology, also raises risks due to the associated instability.

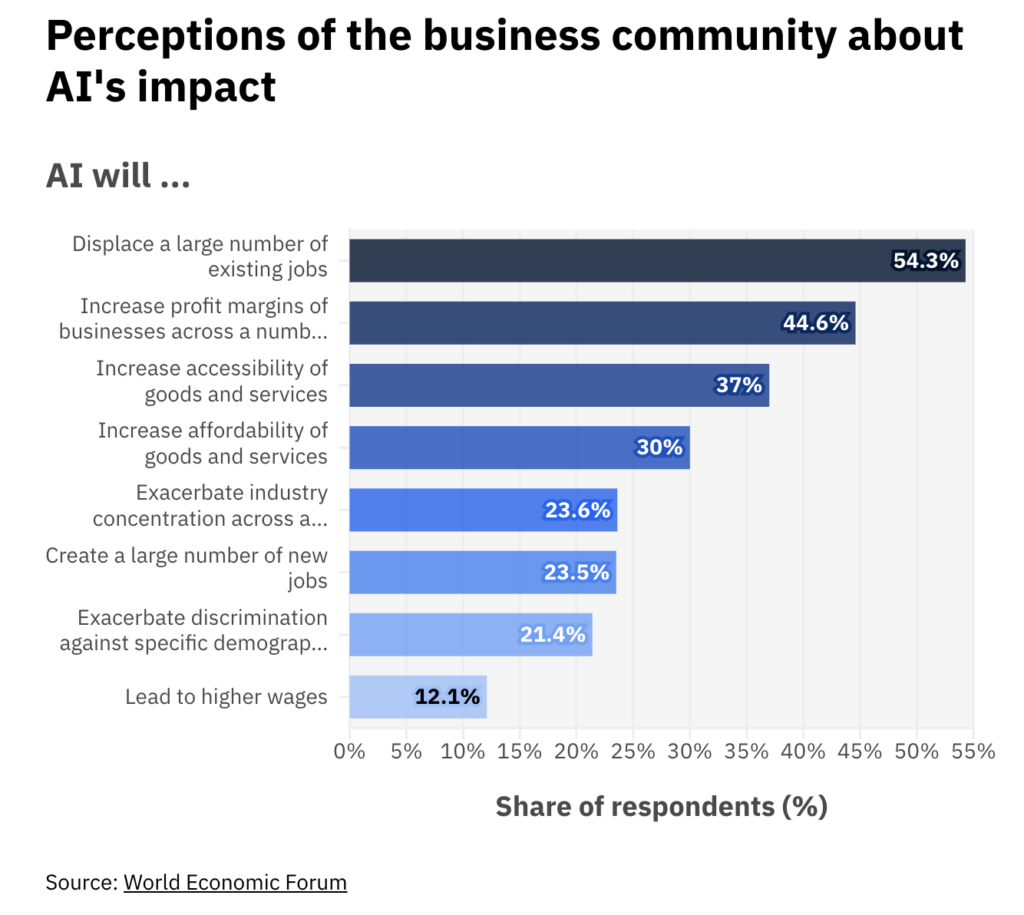

Looking at the most impactful effects of AI, the one indicated most often is a big concern about job displacement, which can be a clear threat to the up-skilling of resources.

My Thoughts: As I started to mention in the Market Evolution section, the AI acceleration can be a threat or a growth opportunity, depending on how properly it is deployed in enterprises, but also on how governments cooperate around it.

The difference in job opportunities across countries, an increased concern about AI, and geopolitical instability can cause slow adoption or, without proper regulations, introduce considerable risks.

On the other hand, better stability in geopolitics can shift the situation and create better confidence in and acceptance of technological change, including better mobility for people, which can also improve their leverage and value, letting them opt for better wages for higher-value jobs in more demanding markets.

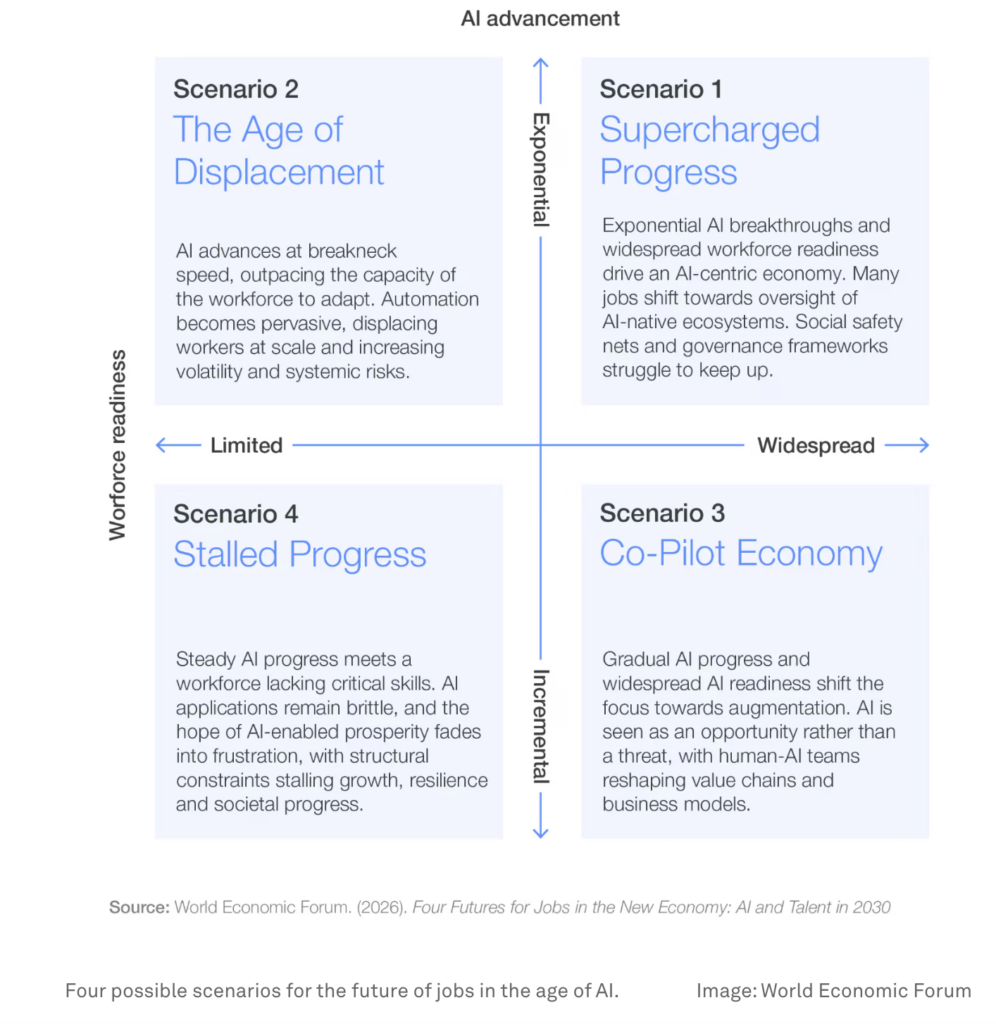

The four final scenarios I want to leave you with, still from the same report, show that a properly prepared workforce, with the right up-skilling and supported by an acceleration of AI advancements, can bring lasting value creation and can increase economic growth through a multiplier effect. It’s again a big change that requires coordinated actions to run effectively and last.

GG